How To

This guide covers how to get Vagrant and Ansible running together on Windows 10 using WSL (Windows Subsystem for Linux), such that they use VirtualBox on the Windows host.

| Action | Steps |

|---|---|

| Install Git on Windows |

|

| Install VirtualBox on Windows |

|

| Install WSL on Windows 10 Pro |

|

| Install Ubuntu 18.04 LTS from Windows Store |

|

| Configure VS Code to use WSL in the Integrated Terminal |

|

| Install Python and PIP in WSL |

|

| Install Ansible in WSL |

|

| Install Vagrant in WSL |

|

| Configure Vagrant in WSL to Use VirtualBox on Windows |

|

| Clone my Demo GitHub Repository |

|

| Start the VM in VirtualBox using Vagrant |

|

| Verify VM was Provisioned |

|

| Stop and Destroy the VM |

|

Helpful Links

- https://docs.microsoft.com/en-us/windows/wsl/install-win10

- https://pip.pypa.io/en/stable/installing/

- https://www.vagrantup.com/downloads.html

- https://www.vagrantup.com/docs/other/wsl.html

- https://www.digitalocean.com/community/tutorial_series/getting-started-with-configuration-management

- https://github.com/erikaheidi/cfmgmt

This article is just a collection of my personal notes while I work through using OpenStack in my new role.

General

OpenStack components can be divided into control, network, and compute.

- Control runs API services, web interfaces, databases, and message bus.

- Network runs service agents for networking.

- Compute is the virtualization hypervisor.

All components use a database and/or a message bus.

- Databases can be MySQL, MariaDB, or PostgreSQL.

- Message buses can be RabbitMQ, Qpid, and ActiveMQ.

Everything in OpenStack must exist in a tenant/project.

Tenants/projects are simply groupings of objects; and users, instances, networks, etc. are examples of objects.

In order to launch an instance, four components are essential:

- Keystone

- Glance

- Neutron

- Nova

Keystone

Keystone manages tenants/projects, users, roles, and catalogs services and endpoints for all components running in a cluster.

- Users must be granted a role in a tenant/project.

- A user cannot log in to a cluster unless they are a member of a tenant/project.

- Even the users the OpenStack components use to communicate with each other have to be members of a tenant to be able to authenticate.

Keystone uses username & password authentication to request tokens, and then uses these tokens for future requests.

Glance

Glance is the image management component.

Before a compute node instance is started, a Glance image is copied and cached onto the ephemeral disk of the new instance.

Glance images are “sealed-disk” images that have had things like SSH host keys and MAC addresses removed.

- This is done so that they can be used repeatedly without interfering with each other.

- Missing host specific information is generated at boot time via a post booting configuration facility named cloud-init.

Cloud-init is a script that is ran post boot to connect back to a metadata service.

Glance images can be found online by searching for a distributions’ name combined with “cloud image”.

To save time, custom images can be created with software “baked” into it. For example, if a LAMP or MEAN stack was required, that software can be pre-installed in the cloud image to reduce time spent setting those items up after deploying the instances.

Custom images can be made by configuring a virtual machine manually. Host specific information is removed, cloud-init is included, and an image is created.

Alternative tools for creating custom cloud images are:

- virt-install

- Oz

- appliance-creator

Neutron

Neutron is the network management component.

Neutron provides an API frontend that manages the SDN (Software Defined Networking) infrastructure.

Using Neutron allows each tenant/project to have its own virtual isolated network.

Each isolated network can be connected to a virtual router to create routes between them.

A virtual router can have an external gateway connected to it — so that when each instance has a floating IP it can have external access to a larger network or the internet.

Traffic sent to a floating IP address is routed through Neutron to an active/launched instance.

The capabilities of Neutron can be referred to as NaaS (Network as a Service).

A hard definition for NaaS would be: “Providing networks and network resources on demand via software.”

Open vSwitch (a virtually managed switch) is a commonly used virtualized networking infrastructure.

Physically managed switches can also be used in place of virtual ones to handle the virtual networks managed by Neutron.

Nova

Nova is the instance management component.

An SSH key pair and a security group must be configured before launching a Nova instance.

A security group is a firewall at the cloud infrastructure layer. It prevents access from anything not within the same security group from making connections outside of the security group.

General (cont.)

Once Keystone has tenants & roles, Glance has a cloud image, a Neutron network is created, an SSH key pair has been made, and a security group is established, a new Nova instance can be started.

The following are the steps taken when a new Nova instance is being launched:

- Resource identifiers are provided to Nova.

- Nova reviews what resources are being used on each hypervisor.

- Nova schedules a new instance to spawn on a compute node.

- The compute node gets a Glance image, creates all necessary virtual network devices, and boots the instance.

- During boot, cloud-init is run and connects to the metadata service.

- The metadata service provides the SSH public key needed for SSH logins to the instance.

- Any post boot configurations are ran.

Cinder

Cinder is the block storage management component.

Volumes can be created and attached to instances.

Block devices can be partitioned; and have filesystems created, formatted, and mounted on them.

Cinder handles snapshots as well.

Several storage backends can be used with Cinder to store volumes and snapshots:

- LVM (Logical Volume Manager)

- GlusterFS

- Ceph

Swift

Swift is the object storage management component.

Object storage is a simple content-only storage system — files are stored without the metadata that a block filesystem uses.

Two layers are used as part of Swift’s deployment:

- Proxy

- Storage Engine

The proxy is an API layer — it communicates with the storage engine on the user’s behalf.

The default storage engine is the Swift storage engine, but GlusterFS and Ceph can also be used.

Ceilometer

Ceilometer is the telemetry component.

It collects resource measurements and is able to monitor the cluster.

It was originally designed for billing users by metering the system, but it evolved into a general purpose telemetry system.

A meter is called a “sample“.

Samples get recorded on a regular basis; and a collection of samples is called a “statistic“.

Statistics can give insights into how resources are being used on an OpenStack deployment.

Alarms can be made with the samples.

Heat

Heat is the orchestration component.

Orchestration is the process of launching multiple instances that are supposed to work together.

Templates are used to define what will be launched during orchestration.

Heat is compatible with AWS CloudFormation templates and implements additional features beyond their template language.

When a template is launched it creates a collection of virtual resources (instances, networks, storage devices, etc.) — referred to as a “stack“.

It is important to note that Heat is not CM (configuration management). It is used for orchestration.

Heat can execute simple post boot configurations, such as invoking an actual CM to do more complex configurations.

RDO using TripleO

One of the easiest ways to get started using OpenStack is to use RDO (http://rdoproject.org/).

TripleO stands for “OpenStack on OpenStack”; and it is intended to deploy an all-in-one OpenStack installation, which is then used as a provisioning tool for a multi-node OpenStack deployment.

The two OpenStacks are called the undercloud and the overcloud.

The undercloud is the baremetal management all-in-one OpenStack installation.

The overcloud is the target deployment of OpenStack that is meant to be provided to end users.

The undercloud can take a cluster of nodes provided to it and deploy the overcloud on them.

Preface

These are my sloppy personal notes as I prepare to take the final Linux+/LPIC1 exam. This will be replaced (in time) with better notes.

Display Managers

LightDM

- LightDM’s main configuration file is

/etc/lightdm/lightdm.conf:- The main section is:

[SeatDefaults] - Common options within this section include:

greeter-session=name— sets a greeter (i.e. a welcome screen).- The

namevalue is the name of the*.desktopfile in the/usr/share/xgreeters/directory.

- The

user-session=name— sets a user environment (i.e. a desktop environment).- The

namevalue is the name of the*.desktopfile in the/usr/share/xsessions/directory.

- The

allow-guest=true— enables guest login.greeter-show-manual-login=true— shows a field to type in a username.greeter-hide-users=true— hides user selection.autologin-user=user— automatically logs in as user.autologin-user-timeout=30— automatic logs in after 30 seconds.

/etc/lightdm/lightdm.conf.d/contains many sub-configuration files.

- The main section is:

GDM3

- Main configuration file is

/etc/gdm3/custom.conf:[daemon]section:AutomaticLoginEnable=True— enables automatic login.AutomaticLogin=user— auto login as user.WaylandEnable=false— disables Wayland and uses Xorg X11 instead.

[security]section:DisallowTCP=false— re-enables TCP, useful for X11Forwarding without SSH.

X

- Protocol is XDMCP (X Display Manager Control Protocol)

on port 177 UDP incomming and ports 6000-6005 TCP bidirectional. - Five XDMCP servers are common:

- XDM

- KDM

- GDM

- LightDM

- MDM

xorg.conf file

- Major sections

- Module

- InputDevice

- Monitor

- Device

- Screen

- ServerLayout

- Module handles loading X server modules via Load.

- InputDevice configures the keyboard and mouse.

- Identifier is a label.

- Driver is the driver to be used for the device (ex.

kbd, mouse, Keyboard, evdev, etc.). - Option sets various options for the device (ex.

Device, Protocol, AutoRepeat, etc.). - Device is usually one of these:

- “/dev/input/mice”

- “/dev/input/mouse1”

- “/dev/usb/usbmouse”

- “/dev/ttyS0”

- “/dev/ttyS1”

- Protocol is the signal X can expect from

the mouse movements and button presses.- “Auto”

- “IMPS/2”

- “ExplorerPS/2”

- “PS/2”

- “Microsoft”

- “Logitech”

- InputDevice configures the keyboard and mouse.

- Monitor section can specify HorizSync, VertRefresh, and

Modeline of the monitor.- Identifier and ModelName can be anything you want.

- HorizSync is in kilohertz (kHz).

- VertRefresh is in hertz (Hz).

- Modeline can be acquired from

cvt <h-resolution> <v-resolution> <refreshRate>. - A new modeline can be added with

xrandr --newmode <modeline>. - Monitor name can be retrieved with `xrandr -q`.

- Device section typically defines the video card being used.

- Identifier, VendorName, and BoardName can be anything

you want. - Driver can be any of the modules that exist in

/usr/lib/xorg/modules/drivers/. VideoRamisn’t necessary to define, but it’s the

amount of RAM in kilobytes.

- Identifier, VendorName, and BoardName can be anything

- Screen section defines the combination of monitor and

video cards being used.- Identifier can be anything.

- Device must match the Identifier from the Device section.

- Monitor must match the Identifier from the Monitor section.

- DefaultDepth is the default SubSection to use based on color depth (32 bit is the greatest depth possible).

- SubSection “Display” defines a display option X may use.

- Depth is the color depth in bits.

- Modes is the modeline (generated by `cvt`)

- EndSubSection completes a subsection.

- ServerLayout section links all the other sections together.

- Identifier can be anything you want.

- Screen is the Identifier(s) in the Screen section.

- InputDevice is the Identifier(s) in the InputDevice

section.

- Files section is used to add fonts and font paths.

- FontPath will define a path to look for fonts.

Fonts

- Font paths can be added in the

xorg.conffile using the Files section, appended withxset fp+ </font/directory>, or prepended withxset +fp </font/directory>. - To have linux re-examine the font path, use

xset fp rehash. - Available fonts may be checked using the

xfontselcommand. - Font servers can be added to the

xorg.confFile section (ex.FontPath "tcp/test.com:7100"). - Default fonts can be adjusted in KDE by typing

systemsettingsin a terminal.

Accessibility

- AccessX was the common method for enabling/editing accessibility options. It has been deprecated but is specifically mentioned on the exam.

- Sticky keys make modifier keys “stick” when pressed, and affect the next regular key to be pressed.

- Can be enabled on GNOME by pressing

shiftkey five times in a row.

- Can be enabled on GNOME by pressing

- Toggle keys play a sound when the locking keys are pressed.

- Mouse keys enables the numpad to act as a mouse.

- Bounce/Debounce keys prevent accidentally pressing a single key multiple times.

- Slow keys require a key to be held longer than a set period of time for it to register a key press.

- Keyboard Repeat Rate determines how quickly a key repeats when held down.

- Time Out sets a time to stop accessibility options automatically.

- Simulated Mouse Clicks can simulate a mouse click whenever the cursor stops moving, or simulate a double click whenever the mouse button is pressed for an extended period.

- Mouse Gestures activate program options by moving your mouse in a specific pattern.

- Sticky keys make modifier keys “stick” when pressed, and affect the next regular key to be pressed.

- GNOME On-Screen Keyboard (GOK) was the onscreen option for GNOME desktop, but has been replaced with Caribou.

- Default fonts can be adjusted in KDE by typing

systemsettingsin a terminal. kmagcan be used to start the KMag on-screen magnifier.- Speech synthesizers for Linux include:

- Orca — integrated in GNOME 2.16+.

- Emacspeak — similar to Orca.

- The BRLTTY project provides a Linux daemon to redirect text-mode console output to a Braille display.

- Since kernel 2.6.26, direct support for Braille displays exists on Linux.

Cron Jobs

- Syntax is:

- Minute of hour (0-59)

- Hour of day (0-23)

- Day of month (1-31)

- Month of year (1-12)

- Day of week (0-7)

0and7are both Sunday.

- Note: Values may be separated by commas or divided by a number (ex.

*/15or0,15,30,45).

/etc/cron.allowdetermines which users are allowed to create cron jobs./etc/cron.denyblocks listed users from creating cron jobs.- System cron jobs are run from the

/etc/crontabfile.- Crontab syntax is:

moh hod dom moy dow user command

- Crontab syntax is:

- Scripts can be placed within the following directories to be automatically processed by the entries in the crontab file:

/etc/cron.hourly//etc/cron.daily//etc/cron.weekly//etc/cron.monthly/

- On Debian systems, any files within the

/etc/cron.d/directory are treated as additional crontab files. - User cron jobs are stored in a file at

/var/spool/cron/crontabs/user. - Use the

crontabcommand to edit the jobs in the /var/spool/cron/crontabs/ directory.-uspecifies the user.-llists all current jobs.-eedits the crontab file.-rremoves the current crontab.-irinteractive prompts for removal

at

atwill execute commands at a specified time.- Do not directly pass a command to the

atcommand.- First enter the

atcommand with a specified time. - An interactive

at>prompt will appear. - Enter all commands desired.

- Press

^dto send the EOF input to complete the job submission.

- First enter the

- Accepts the following time strings:

now|hh am|hh pm + value minutes|hours|days|weekstodaytomorrowHHMMHH:MMMMDD[CC]YYMM/DD/[CC]YYDD.MM.[CC]YY[CC]YY-MM-DD- Examples:

at 4pm + 3 daysat 10am Jul 31at 1am tomorrow

-msend mail to the user when the job completes.-Mnever mail the user.-fread the job from a file.-trun the job at a specific time.- [[CC]YY]MMDDhhmm[.ss]

-llist all jobs queued.- Alias for

atq.

- Alias for

-rremove a job.- Alias for

atrm.

- Alias for

-ddelete a job.- Alias for

atrm.

- Alias for

- Do not directly pass a command to the

atqqueries and lists all jobs currently scheduled and their job IDs.atrmremoves jobs by ID.- Access to the

atcommand can be restricted with/etc/at.allowand/etc/at.deny.

anacron

- Similar to cron but runs periodically when available, rather than at specific times. This makes it useful for systems that are not running continuously.

/var/spool/anacronis where timestamps from anacron jobs are stored.- When

anacronis executed, it reads a list of jobs from a configuration file at/etc/anacrontab.- Each job specifies:

- Period in days

@daily@weekly@monthly- numeric value

1-30

- Delay in minutes

- Unique job identifier name

- Shell commands

- Example:

1 5 cron.daily run-parts --report /etc/cron.daily7 10 cron.weekly run-parts --report /etc/cron.weekly@monthly 15 cron.monthly run-parts --report /etc/cron.monthly

- Period in days

-fforces execution of jobs, regardless of timestamps.-uupdates the timestamps without running.-sserialize jobs — a new job will not start until the previous one is finished.-nnow — run jobs immediately (implies-sas well).-ddon’t fork to background — output informational messages to STDERR, as well as syslog.-qquiet messages to STDERR when using-d.-tspecify a specific anacrontab file instead of the default.-Ttests the anacrontab file for valid syntax.-Sspecify the spooldir to store timestamps in. Useful when running anacron as a regular user.

- Each job specifies:

run-parts

- Executes scripts within a directory.

- Used often in crontab and anacrontab to execute scripts within the cron.daily, cron.weekly, cron.monthly, etc. directories.

Time

- Linux uses Coordinated Universal Time (UTC) internally.

- UTC is the time in Greenwich, England, uncorrect for daylight savings time.

Timezone

- Linux uses the

/etc/localtimefile for information about its local time zone./etc/localtimeis not a plain-text file, and is typically a symlink to a file in/usr/share/zoneinfo/.- Example:

$ ll /etc/localtime

lrwxrwxrwx 1 root root 35 Jun 24 03:02 /etc/localtime -> /usr/share/zoneinfo/America/Phoenix

- Example:

- Debian based distributions also use

/etc/timezoneto store text-mode time zone data. - Redhat based distributions also use

/etc/sysconfig/clockto store text-mode time zone data. - A user can set their individual timezone using the TZ environment variable.

export TZ=:/usr/share/zoneinfo/timezone- std offset can be used in place of

:/usr/share/zoneinfo.- When daylight savings is not in effect.

std offset- Ex.

MST+3

- When daylight savings is in effect:

- std offset dst[offset],start[/time],end[/time]

- Ex.

MST+3EST,M1.19.0/12,M4.20.0/12

- When daylight savings is not in effect.

Locale

- A locale is a way of specifying the machine’s/user’s language, country, and other information for the purpose of customizing displays.

- Locales take the syntax of:

[language[_territory][.codeset][@modifier]]- language is typically a two or three-letter code (

en,fr,ja, etc.) - territory is typically a two letter code (

US,FR,JP, etc.). - codeset is often

UTF-8,ASCII, etc. - modifier is a locale-specific code that modifies how it works.

- language is typically a two or three-letter code (

- Locales take the syntax of:

- The

localecommand can be used to view your current locale.LC_ALLis kind of like a master override — if it is set, all otherLC_*variables are overridden by it.LANGwill be used as a default for anyLC_*variables that are not set.- Setting

LANG=Cprevents programs from passing their output through locale translations.

- Setting

locale -ashows all available locales on the system.

- The

iconvcommand can be used to convert between character sets.iconv -f encoding [-t encoding] [inputfile]...-fis the source encoding.-tis the destination encoding.

- Ex.

iconv -f iso-8859-1 -t UTF-8 german-script.txt

hwclock

hwclockis used to synchronize the hardware clock with the system clock.-r/--showwill show the current hardware clock time:Thu 02 Aug 2018 01:46:09 AM MST -0.329414 seconds

-s/--hctosyswill set system time from hardware clock.-w/--systohcwill set the hardware clock from system time.

date

datedisplays the current date and time.- Accepted datetime format is

MMDDhhmm[[CC]YY][.ss]]-d/--date=sets the date and time.- Defaults to

nowif not used.

- Defaults to

-s/--set=sets time to provided value.-u/--utc/--universalprint or set time in Coordinated Universal Time (UTC).

- Output can be formatted with

date +"%format":%a– abbreviated weekday name (Mon)%A– non-abbreviated weekday name (Monday)%b– abbreviated month name (Jan)%B– non-abbreviated month name (January)%c– locale’s date and time (Thu Aug 2 00:10:29 2018)%C– century (20)%d– day of month (01)%D– date; same as%m/%d/%y(8/2/18)%e– day of month with space padding; same as%_d(01)%F– full date; same as%Y-%m-%d(2018-8-2)%H– hour (00 - 23)%I– hour (01 - 12)%j– day of year (001 - 366)%k– hour with space padding; same as%_H(21)%l– hour with space padding; same as%_I(09)%m– month (01 - 12)%M– minute (00 - 59)%n– newline%N– nanoseconds%p– locale’s equivalent ofAM/PM%P– same as%p, but lowercase%q– quarter of year (1 - 4)%r– locale’s 12 hour clock (12:16:43 AM)%R– 24 hour clock; same as%H:%M(00:16)%s– seconds since January 1st, 1970 UTC%S– second (00 - 60)%t– tab%T– time; same as %H:%M:%S (00:23:53)%u– day of week (1 - 7);1is Monday%U– week number of year, starting on Sunday (00 - 53)%w– day of week (0 - 6);0is Sunday%W– week number of year, starting on Monday (00 - 53)%x– locale’s date representation (8/2/18)%X– locale’s time representation (00:19:43)%y– last two digits of year (00 - 99)%Y– year%z– +hhmm numeric time zone (-0400)%Z– time zone abbreviation (MST)- Example:

date +"%A %B %d, %Y - %I:%M:%S %p"

Thursday August 02, 2018 – 12:30:45 AM

NTP

- The NTP daemon is responsible for querying NTP servers listed in

/etc/ntp.conf.- Example:

server 0.centos.pool.ntp.org iburst

server 1.centos.pool.ntp.org iburst

server 2.centos.pool.ntp.org iburst

server 3.centos.pool.ntp.org iburst

- Example:

- The

ntpdatecommand synchronizes time with the NTP servers but is deprecated in favor ofntpd -gq.- Note:

ntpdmust be stopped in order forntpd -gqto work:ntpd: time slew -0.011339s

- Note:

- The

ntp.driftfile is responsible for adjusting the system’s clock as clock drift occurs, and is typically stored in/var/lib/ntp/or/etc/. - The

ntpqcommand opens an interactive mode for ntpd, with anntpq>prompt.ntpq> peersshows details about the NTP servers in use.refidis the server to which each system is synchronized.stis the stratum number of the server.- Note:

ntpq -p/ntpq --peersfunctions the same without being in an interactive prompt.

ssh

ssh-addis used to add an RSA/DSA key to the list maintained by ssh-agent (ex. ssh-add ~/.ssh/id_rsa).- Enabled port tunneling with ‘

AllowTcpForwarding yes‘.

gpg

- Generated keys are stored in

~/.gnupg.

Printing

- Ghostscript translates PostScript into forms that can be understood by your printer.

- The print queue is managed by the Common Unix Printing System (CUPS).

- Users can submit print jobs using

lpr. - Typically a print queue is located in

/var/spool/cups. lpq -ato display all pending print jobs on local and remote printers.

- qmail and Postfix are modular servers.

newaliasescommand converts the aliases file to a binary format.

logs

logrotatecan be used to manage the size of log files.loggeris the command used to record to the system log.- Start syslogd with the

-roption to enable acceptance of remote machine logs.

bash

/etc/profileis the global configuration file for the bash

shell.

Network Addresses

- IP addresses can be broken into a network address and a computer address based on a netmask / subnet mask.

- Network address is a block of IP addresses that are used by one physical network.

- Computer address identifies a particular computer within that network.

- IPv4 addresses.

- 32 bits (4 bytes), binary.

- Represented as four groups of decimal numbers separated by dots

(ex.192.168.1.1). - Classes are address ranges determined by a binary value of the leftmost digit.

00000001 - 01111111=1 - 127— Class A10000000 - 10111111=128 - 191— Class B11000000 - 11011111=192 - 223— Class C11100000 - 11101111=224 - 239— Class D11110000 - 11110111=240 - 255— Class E- If it starts with a

0= Class A,1= Class B,11= Class C,111= Class D,1111= Class E

- Reserved private address spaces / RFC 1918 addresses are:

- Class A —

10.0.0.0 - 10.255.255.255 - Class B —

172.16.0.0 - 172.31.255.255 - Class C —

192.168.0.0 - 192.168.255.255

- Class A —

- Network Address Translation (NAT) routers can substitute their own IP address on outgoing packets from machines within a reserved private address space; effectively allowing any number of computers to hide behind a single IP address.

- Address Resolution Protocol (ARP) can be used to convert between MAC and IPv4 addresses.

- IPv6 addresses.

- 128 bits (16 byte), hexadecimal.

- Represented as eight groups of 4-digit hexadecimal values separated by colons

(ex.2001:4860:4860:0000:0000:0000:0000:8888). - Two types of network addresses:

- Link-Local

- Nonroutable — can only be used for local network connectivity.

fe80:0000:0000:0000:is the standard for IPv6 interfaces.

- Global

- Uses a network address advertised by a router on the local network.

- Link-Local

- Neighbor Discovery Protocol (NDP) can be used to convert between MAC and IPv6 addresses.

- Netmasks / subnet masks are binary numbers that identify which portion of an IP address is a network address and which part is a computer address.

1= part of the network address.0= part of the computer address.- They can be represented using dotted quad notation or Classless Inter-Domain Routing (CIDR) notation:

- Dotted Quad:

255.255.255.0255=11111111— all eight bits are the network address.0=00000000— all eight bits belong to a computer address.

- CIDR:

192.168.1.1/24- The number after the forward slash represents the number of bits belonging to the network address.

- To convert from

192.168.1.100/27CIDR to dotted quad netmask:27represents the number of bits with a value of1, starting from the left-most digit:11111111 11111111 11111111 11100000

- Convert the binary values of each byte to decimal values:

11111111= 128+64+32+16+8+4+2+1 =25511100000= 128+64+32 =224

- Put all of the decimal values in a dotted quad format:

255.255.255.224

- To convert from

255.255.192.0dotted quad to CIDR:- Convert the decimal values to binary values:

255=11111111192=11000000- Math Tip

- Take

255and subtract192to get63. - Since

63is 1 less than64, all bits below the 64th are1

(i.e.001111111). - Subtract

11111111(binary 255) by this value00111111, to get11000000.

- Take

- Math Tip

0=00000000

- Place the binary values into one 32 bit string:

11111111 11111111 11000000 00000000

- Count the number of digits from the left with a value of

1:18- So the IP would be represented as

xxx.xxx.xxx.xxx/18in CIDR notation.

- Convert the decimal values to binary values:

- Dotted Quad:

- Media Access Control (MAC) addresses represent unique hardware addresses.

- 48 bits (6 bytes), hexadecimal.

- A broadcast query is sent out to all computers on a local network and asks a machine with a given IP address to identify itself. If the machine is on the network it will respond with its hardware address, so the TCP/IP stack can direct traffic for that IP to the machine’s hardware address.

- Dynamic Host Configuration Protocol (DHCP).

ipandifconfigcan both be used to add a new IPv6 address to a network interface.ifconfig promiscconfigures the interface to run in promiscuous mode — receiving all packets from the network regardless of the packet’s intended destination.

Network Configuring

ifconfig

route

/etc/nsswitch.conf

Network Diagnostics

netstat

host

dig

netcat / nc

nmap

tracepath

traceroute / traceroute6

ping / ping6

/etc/services

- Provides a human-friendly mapping between internet services, their ports, and protocol types.

- Each line describes one service:

service-name port/protocol [aliases ...]

Ports & Services

- SNMP listens on port 162 by default.

User and Group Files

/etc/passwd

- Contains information about users, their IDs, and basic settings like home directory and default shell.

- One line for each user account, with seven fields separated by colons.

- Username

- Password

xmeans the password is encrypted in the /etc/shadow file.

- UID

- GID

- Comment

- the user’s real name is generally stored here

- Home directory

- Default shell

- Examples:

root:x:0:0:root:/root:/bin/bashjeff:x:1000:1000:jeff,,,:/home/jeff:/bin/bash

/etc/shadow

- Contains encrypted passwords and information related to password/account expirations.

- One line for each user account, with nine fields separated by colons.

- Username

- Encrypted password

*means the account does not accept logins.!means the account has been locked from logging in with a password.!!means the password hasn’t been set yet.

- Last day the password changed (in days since January 1st, 1970).

- Min number of days to wait before a password change is allowed.

- Max number of days a password is valid for before a change is required.

- Password expiration occurs after this date.

- An expired password means the user must change their password to gain access again.

- Number of days to start showing warnings before the max date is reached.

- Number of inactive days allowed after password expiration.

- Account deactivation occurs after the inactive day is passed.

- A deactivated account requires a system admin to reinstate the account.

- Day when account expiration will occur (in days since January 1st, 1970).

- Reserved field that hasn’t been used for anything.

- Examples:

jeff:$9$eNcrYpt3D.23534e/ghlar2k.:17706:0:99999:7:::sshd:*:17706:0:99999:7:::

/etc/group

- Contains information about groups, their ID, and their members.

- One line per group, with four fields separated by colons.

- Group name

- Password

xmeans the password is encrypted in the/etc/gshadowfile.

- GID

- User list (comma separated)

- Examples:

jeff:x:1000:sambashare:x:126:jeff

User & Group Commands

- The first 100 UID and GIDs are reserved for system use.

0typically corresponds toroot.

- The first normal user account is usually assigned a UID of

500or1000. - User and group numbering limits are set in the

/etc/login.defsfile.UID_MINandUID_MAXdefines the minimum and maximum UID value for an ordinary user.GID_MINandGID_MAXwork similarly for groups.

chage

- chage can set the password expiration information for a user.

-llists current account aging details.-d/--lastdaysets the day that the password was last changed

(without actually changing the password).- Accepts a single number (representing the number of days since Jan 1st, 1970) or a value formatted in

YYYY-MM-DD. 0will force the user to change their password on the next login.

- Accepts a single number (representing the number of days since Jan 1st, 1970) or a value formatted in

-m/--mindayssets the number of days that must pass before a password can be changed.0disables any waiting period.

-M/--maxdayssets the number of days before a password change is required.- Accepts a single value for the number of days.

-1disables checking for password validity.

-W/--warndayssets when to start to displaying a warning message that a required password change is coming.-I/--inactivesets the number of days a password must be expired for the password to be marked inactive.- Accepts a single number.

-1removes an account’s inactivity.

-E/--expiredatesets the account expiration date.- Accepts a single number or

YYYY-MM-DDvalue. -1removes the expiration date.

- Accepts a single number or

- If no options are provided to

chage, it will interactively prompt for input to the various values it can set.

useradd / adduser (Debian)

- Doesn’t work as intended in Debian based distributions (because they’ve had a bug since forever and would rather you use a completely new command than get on board with standards… /rant), use

adduserinstead. - Creates new users or updates default new user details.

-D/--defaultsuse Default values for anything not explicitly specified in options.- Execute

useradd -Dwithout any other options to display the current defaults.GROUP=100

HOME=/home

INACTIVE=-1

EXPIRE=

SHELL=/bin/sh

SKEL=/etc/skel

CREATE_MAIL_SPOOL=no

- Execute

-d/--home-dirspecify home directory.-e/--expiredatesets the expiration date of the account.YYYY-MM-DD- Similar to

chage -E

-f/--inactivesets the number of days before making an account inactive after password expires.- Similar to

chage -I

- Similar to

-g/--gidgroup name or number for the user’s initial login group.- The group must already exist.

-G/--groupssupplementary groups to add the user to.- Groups are separated by commas with no white space.

-m/--create-homecreates the user’s home directory if it does not already exist.-M/--no-create-homeexplicitly specifies not to create the home directory for the user.- Overrides the

CREATE_HOME=yesvalue in/etc/login.defs, if set.

- Overrides the

-k/--skelspecifies the skeleton directory to use.- The

-moption must be used for this to work. - Without this option, it defaults to the

SKELvariable value in/etc/default/useradd.

- The

-K/--keysets UID_MIN, UID_MAX, UMASK, etc. KEY=VALUE option in the/etc/login.defsfile.-N/--no-user-groupdo not create a group with the same name as the user, but add the user to the group specified by the-goption.-o/--non-uniqueallow creation of a user account with a duplicate UID- Must use the

-uoption to specify the UID to use.

- Must use the

-p/--passwordthe encrypted password to use, as returned by thecryptcommand.- Not recommended to use due to plaintext password appearing in history.

-r/--systemcreate a reserved system account.- No aging information in /etc/shadow, UID/GID are generated in reserved range.

-s/--shellspecifies the user’s default shell. –- Default value is the SHELL variable in

/etc/default/useradd.

- Default value is the SHELL variable in

-u/--uidspecify the UID.-U/--user-groupexplicitly create a group with the same name as the user.

usermod

userdel

groupadd / addgroup (Debian)

groupmod

groupdel

newgrp

getent

getentdisplays the contents of various Name Service Switch (NSS) libraries.- Supported libraries:

- ahosts

- ahostsv4

- ahostsv6

- aliases

- ethers

- group

- gshadow

- hosts

- initgroups

- netgroup

- networks

- passwd

- protocols

- rpc

- services

- shadow

- Supported libraries:

Sudoers

- Access to the

sudocommand is configured in the/etc/sudoersfile. visudois the recommended command to edit the/etc/sudoersfile — as it locks the file from other’s editing it at the same time.- Syntax for entries in the sudoers file:

username hostname = TAG: /command1, /command2, [...]- Example:

ray rushmore = NOPASSWD: /bin/kill, /bin/ls, /usr/bin/lprm

- Tags:

PASSWD/NOPASSWD— require or not require the user to enter their password to usesudo.EXEC/NOEXEC— allow or prevent executables from running further commands itself.- Example, shell escapes will be unavailable in

viwithNOEXEC.

- Example, shell escapes will be unavailable in

FOLLOW/NOFOLLOW— allow or prevent opening a symbolic link file.MAIL/NOMAIL— whether or not mail is sent when a user runs a command.SETENV/NOSETENV— use the values ofsetenvor not on a per-command basis.

- Use of the

sudocommand is logged via syslog by default.

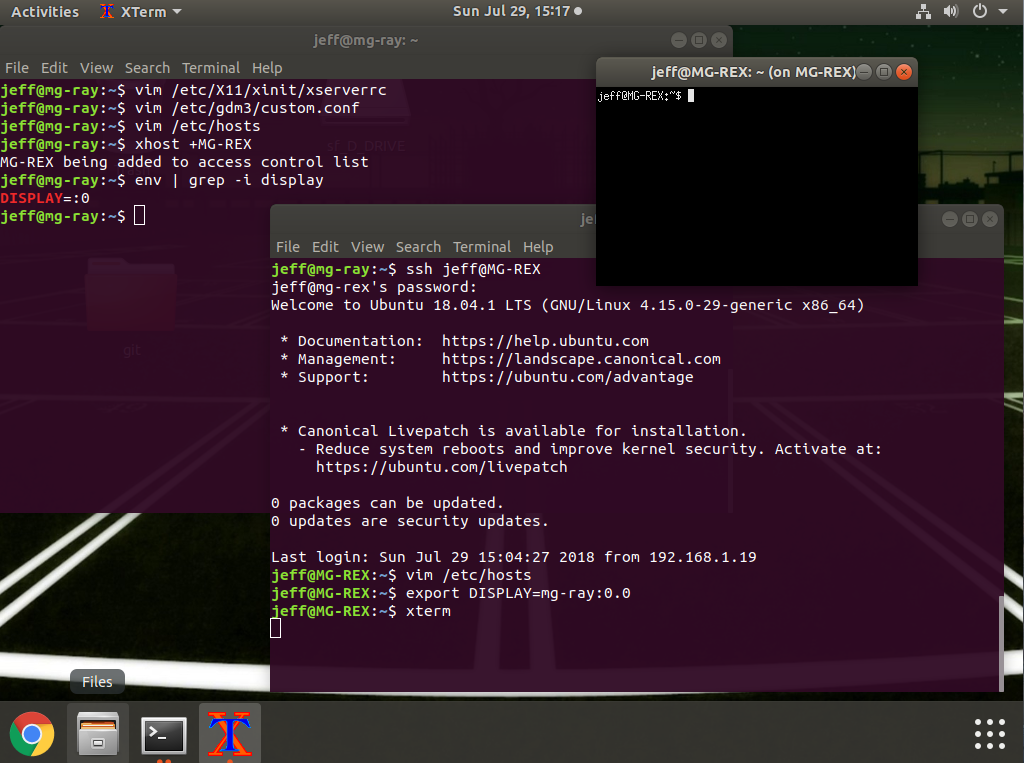

Preface

Generally it is recommended to forward X using SSH, as it provides an encrypted connection. However, on machines limited to a local network, tunneling through SSH results in a performance hit.

This article covers how to forward X without using SSH on Ubuntu 18.04.

Local machine = the machine you have access to and want to do work on.

Remote machine = the machine that will be running the applications.

Steps

- Enable X Server to listen via TCP on the local machine.

sudo vim /etc/X11/xinit/xserverrc- Change:

exec /usr/bin/X -nolisten tcp "$@" - To:

exec /usr/bin/X "$@"

- Change:

sudo vim /etc/gdm3/custom.conf- Add

Enable=trueunder the[xmdcp]section. - Add

DisallowTCP=falseunder[security]section.

- Add

- Restart X on the local machine.

- There are several ways to accomplish this, here are a couple of easy ones:

sudo reboot- Switch to a text mode and back to graphical mode:

sudo systemctl isolate multi-user.targetsudo systemctl isolate graphical.target

- There are several ways to accomplish this, here are a couple of easy ones:

- Add local hostname to hosts file on the remote machine.

sudo vim /etc/hosts- Add:

ip_address hostname [aliases]

- Add:

- Add remote hostname to authorized xhosts on local machine.

xhost +hostname

- Export the DISPLAY environment variable on the remote machine to that of the local machine.

export DISPLAY=hostname:0.0

- Launch an X application via SSH or telnet when connected to the remote machine, and it should open on the local machine.

Example Screenshot

How To

By default, the kernel decides when to make the console go blank / black (usually 10 minutes / 600 seconds).

To check the current value:

$ cat /sys/module/kernel/parameters/consoleblank

To temporarily update the value:

$ setterm -blank <value>

Note: If the value is 0, blanking will be disabled entirely.

To permanently update the value, use one of the following methods:

- Edit the Grub configuration files to include this kernel parameter:

consoleblank=0 - Create a startup script that contains:

setterm -blank <value>

CentOS 7

Changes to the kernel parameters can be made within the /etc/default/grub file, within the GRUB_CMDLINE_LINUX entry.

Example:

GRUB_CMDLINE_LINUX="[...] consoleblank=0"

To make this change effective:

# grub2-mkconfig -o /boot/grub2/grub.cfg

Note: If using EFI instead of MBR, use the following to make the change effective:

# grub2-mkconfig -o /boot/efi/EFI/centos/grub.cfg

CentOS 6

Changes to the kernel parameters can be made within the /etc/grub.conf file, within the kernel entry.

Example:

kernel [...] consoleblank=0

To make this change effective:

# grub-install <device/partition>

Note: Most of the time the device will be /dev/sda but always double check.

Ubuntu

Changes to the kernel parameters can be made within the /etc/default/grub file, within the GRUB_CMDLINE_LINUX_DEFAULT entry.

Example:

GRUB_CMDLINE_LINUX_DEFAULT="[...] consoleblank=0"

To make this change effective:

# update-grub > /boot/grub/grub.cfg

Booting Linux and Editing Files

Exam Objectives

- 101.2 – Boot the system

- 101.3 – Change runlevels and shutdown or reboot system

- 102.2 – Install a boot manager

- 103.8 – Perform basic file editing operations using vi

Installing Boot Loaders

The machine’s boot process begins with a program called a boot loader.

Boot loaders work in different ways depending on the firmware used and the OS being booted.

The most used boot loader for Linux is the Grand Unified Boot Loader (GRUB).

GRUB is available in two versions:

- GRUB Legacy (versions 0 – 0.97)

- GRUB 2 (versions 1.9x – 2.xx)

An older Linux boot loader also exists, called the Linux Loader (LILO).

Boot Loader Principles

The computer’s firmware reads the boot loader into memory from the hard disk and executes it.

The boot loader is responsible for loading the Linux kernel into memory and starting it.

Note: Although the exam objectives only mention the Basic Input/Output System (BIOS) firmware, the Extensible Firmware Interface (EFI) and Unified EFI (UEFI) are becoming increasingly important.

BIOS Boot Loader Principles

The BIOS boot process varies depending on its many options.

The BIOS first selects a boot device to use (hard disk, USB stick, etc.).

If a hard disk is selected, the BIOS loads code from the Master Boot Record (MBR).

The MBR is located within the first sector (512 bytes) of a hard disk. This 512 bytes is broken up into:

- Bootloader assembly code – 446 bytes.

- Partition table for four (4) primary partitions – 64 bytes (16 bytes each).

- Sentinel value – 2 bytes (with a value of

0xAA55if bootable).

The MBR contains the primary boot loader code.

The primary boot loader does one of two things:

- Examines the partition table, locates the partition that’s marked as bootable, and loads the boot sector from that partition to execute it.

- Locates an OS kernel, loads it, and executes it directly.

In the first instance, the boot sector contains a secondary boot loader, which ultimately locates an OS kernel to load and execute.

Linux’s most popular BIOS boot loaders (LILO and GRUB) can be installed in either the MBR or the boot sector of a boot partition.

Windows systems come with a boot loader that is installed directly to the MBR.

Note: Installing Windows alongside a Linux system will result in replacement of the MBR-based boot loader. To reactivate the Linux boot loader, Windows’ FDISK utility can be used to mark the Linux partition as the boot partition.

On an MBR partitioning system, a primary partition must be used for storing a Linux partition’s boot sector. If the boot sector is located within a logical partition it can only be accessed via a separate boot loader in the MBR or a primary partition.

On disks that use the GUID Partition Table (GPT) partitioning system, GRUB stores part of itself within a special partition, known as the BIOS boot partition. On MBR disks, the equivalent code is stored in the sectors immediately following the MBR (which are officially unallocated in the MBR scheme).

Note: Occasionally a reference is made to the “superblock” when discussing BIOS boot loaders. The superblock is part of the filesystem; and describes basic filesystem features, such as the filesystem’s size and status. On BIOS-based computers, the superblock may hold a portion of the boot loader, and damage to it can cause boot problems. The debugfs and dump2efs commands can provide some superblock information.

EFI Boot Loader Principles

EFI is much more complex than the older BIOS.

Instead of relying on code stored within the boot sectors of a hard disk, EFI relies on boot loaders stored as files in a disk partition known as the EFI System Partition (ESP) — which uses the File Allocation Table (FAT) filesystem.

Within Linux, the ESP is typically mounted at /boot/efi.

Inside of /boot/efi/EFI are subdirectories named after the OS or boot loader being used (ex. ubuntu, suse, fedora, etc.).

Those subdirectories contain boot loaders as .efi files.

For example, /boot/efi/EFI/ubuntu/grub.efi or /boot/efi/EFI/suse/elilo.efi.

This configuration allows the option to store a separate boot loader for each OS that is installed on the machine.

EFI includes a boot manager to help select which boot loader to launch.

Note: The exam objectives use the terms boot loader and boot manager interchangeably. A boot loader loads a kernel into memory and passes control to it. A boot manager presents a menu of boot options. GRUB (and other programs) combine both functions, which may be the reason why many sources don’t differentiate between the two terms.

Boot loaders must be registered in order for EFI to use them. This can be done by either using a utility built into the firmware’s own user interface or by using a tool such as Linux’s efibootmgr program.

Most x86-64 EFI implementations will use a boot loader called EFI/boot/bootx64.efi on the ESP as a default if no others are registered. Removable disks typically store their boot loader using this name as well.

GRUB Legacy

GRUB is the default boot loader for most Linux distributions.

Configuring GRUB Legacy

/boot/grub/menu.lst is the usual location for GRUB Legacy’s configuration file on a BIOS-based computer.

Some distributions (ex. Fedora, Red Hat, and Gentoo) use the filename grub.conf in place of menu.lst.

The GRUB configuration file can be broken into global and per-image sections.

Note: GRUB Legacy officially supports BIOS but not EFI. A heavily patched version, maintained by Fedora, provides support for EFI. If using this version of GRUB, its configuration file is located in the same directory on the ESP that houses the GRUB Legacy binary, such as /boot/efi/EFI/redhat for a standard Fedora or Red Hat installation.

GRUB Nomenclature and Quirks

The following is an example GRUB configuration file:

# grub.conf/ menu.lst

#

# Global Options:

#

default=0

timeout=15

splashimage=/grub/bootimage.xpm.gz

#

# Kernel Image Options:

#

title Fedora (3.4.1)

root (hd0,0)

kernel /vmlinuz-3.4.1 ro root=/dev/sda5 mem=4096M

initrd /initrd-3.4.1

title Debian (3.4.2-experimental)

root (hd0,0)

kernel (hd0,0)/bzImage-3.4.2-experimental ro root=/dev/sda6

#

# Other operating systems

#

title Windows

rootnoverify (hd0,1)

chainloader +1

In the above example, Fedora exists on /dev/sda5, Debian exists on /dev/sda6, and Windows exists on /dev/sda2. Debian and Fedora share a /boot partition on /dev/sda1, where the GRUB configuration resides.

GRUB doesn’t refer to disk drives by device filename the way Linux does. Instead, GRUB numbers drives (i.e. /dev/hda or /dev/sda becomes (hd0), and /dev/hdb or /dev/sdb becomes (hd1)).

Note: GRUB also doesn’t distinguish between PATA, SATA, SCSI, and USB drives. On mixed systems, ATA drives are typically given the lowest drive numbers, but that is not guaranteed.

GRUB Legacy’s drive mappings can be found in the /boot/grub/device.map file.

GRUB Legacy separates partition numbers from drive numbers with a comma. For example, (hd0,0) for the first partition of the first hard disk (typically /dev/sda1 or /dev/hda1 in Linux), and (hd0,4) for the first logical partition of the first hard disk (normally /dev/sda5 or /dev/hda5).

Global GRUB Legacy Options

GRUB’s global section precedes its per-image configurations.

Common options in the global section:

| Feature | Option | Description |

| Default OS | default=<num> |

Specifies a default OS for GRUB to boot.

Note: Index starts at 0. |

| Timeout |

|

The seconds GRUB will wait for user input before booting the default OS. |

| Background Graphic |

|

Sets the Note: The file and path are relative to the GRUB root partition. If |

Global GRUB Legacy Per-Image Options

By convention, GRUB Legacy’s per-image options are often indented after the first line.

The options start with an identification followed by options that tell GRUB how to handle the image.

Common options in the per-image section:

| Feature | Option | Description |

| Title | title <label> |

The label to display on the boot loader menu.

|

| GRUB Root | root <drive-nums> |

The location of GRUB Legacy’s root partition — which is the /boot partition if a separate partition is made for it.

ex. |

| Kernel Specification | kernel <path> <options> |

The location of the Linux kernel, and any kernel options to be passed to it. The Note: Because the |

| Initial RAM Disk | initrd <path> |

The <path> specifies the location of the initial RAM disk — which holds a minimal set of drivers, utilities, and configuration files that the kernel uses to mount its root filesystem before the kernel can fully access the hard disk.

Note: |

| Non-Linux Root | rootnoverify <drive-nums> |

Similar to the root option, but GRUB Legacy will not try to access files on this partition.

This option is used to specify a boot partition for operating systems that GRUB Legacy can’t directly load a kernel for, such as Windows. ex. |

| Chainloading | chainloader +<sector-num> |

Tells GRUB Legacy to pass control to another boot loader.

The |

Note: Chainloading on an EFI-enabled version of GRUB Legacy requires specifying the ESP as the root (typically root (hd0,0)), and passing the name of an EFI boot loader file (ex. chainloader /EFI/Microsoft/boot/bootmgfw.efi).

To add a kernel to GRUB:

- As

root, openmenu.lstorgrub.confin a text editor. - Copy a working configuration for a Linux kernel.

- Modify the

titleline with a unique name. - Modify the

kernelline to point to the new kernel, and specify any kernel options. - Make appropriate changes to the

initrdline (if adding, deleting, or changing aninitramfsRAM disk). - Change the global

defaultline to point to the new kernel (if desired). - Save changes and exit the text editor.

New kernel options in GRUB will appear in the menu after a reboot.

Installing GRUB Legacy

To install GRUB Legacy on a BIOS-based machine:

grub-install <device>

To install GRUB Legacy into the MBR (first sector of the first hard drive), <device> can be set with either a Linux or GRUB style device identifier (/dev/sda or '(hd0)').

To install GRUB Legacy into the boot sector of a partition instead, a partition identifier must be included with either the Linux or GRUB style device identifier (ex. /dev/sda1 or (hd0,0)).

To install Fedora’s EFI-enabled version of GRUB Legacy, copy the grub.efi file to a suitable directory in your ESP (ex. /boot/efi/EFI/redhat), copy grub.conf to the same location, and run the efibootmgr utility to add the boot loader to the EFI’s list:

# efibootmgr -c -l [[backslash backslash]]EFI[[backslash backslash]]redhat[[backslash backslash]]grub.efi -L GRUB

The above command adds GRUB Legacy, stored in the ESP’s /EFI/redhat directory, to the EFI’s boot loader list. Double backslashes ([[backslash backslash]]) must be used instead of Linux style forward slashes (/).

Note: If using Fedora’s grub-efi RPM file, the grub.efi file should be placed in this location by default.

Interacting with GRUB Legacy

GRUB Legacy will show a list of all of the operating systems that were specified with the title option in the GRUB configuration file.

If the timeout expires, a default operating system will be booted.

To select an alternative to the default, use the arrow keys to highlight the operating system desired and press the Enter key.

To pass additional options to an operating system:

- Use the

arrowkeys to highlight the operating system. - Press

eto edit the entry. - Use the

arrowkeys to highlight thekerneloption line. - Press

eto edit the kernel options. - Edit the

kernelline to add any options (such as1to boot to single-user mode)2. GRUB Legacy passes the extra option to the kernel. - Press

Enterto complete the edits. - Press

bto start booting.

Note: Any changes can be made during step 5. For example, if a different init program is desired, it can be changed by appending init=<program> (ex. init=/bin/bash) to the end of the kernel line.

Note2: To get to single-user mode when booting Linux, 1, S, s, or single can be passed as an option to the kernel by the boot loader.

GRUB 2

The GRUB 2 configuration file is /boot/grub/grub.cfg.

Note: Some distributions place the file in /boot/grub2 to allow simultaneous installations of GRUB Legacy and GRUB 2.

GRUB 2 adds features, such as:

- Support for loadable modules for specific filesystems and modes of operation.

- Conditional logic statements (enabling loading modules or displaying menu entries only if particular conditions are met).

The following is a GRUB 2 configuration file based on the previous example:

# grub.cfg

#

# Kernel Image Options:

#

menuentry "Fedora (3.4.1)" {

set root=(hd0,1)

linux /vmlinuz-3.4.1 ro root=/dev/sda5 mem=4096M

initrd /initrd-3.4.1

}

menuentry "Debian (3.4.2-experimental)" {

set root=(h0,1)

linux (hd0,1)/bzImage-3.4.2-experimental ro root=/dev/sda6

}

#

# Other operating systems

#

menuentry "Windows" {

set root=(hd0,2)

chainloader +1

}

Compared to GRUB Legacy, the important changes are:

titlechanged tomenuentry.- Menu titles are enclosed in quotes.

- Each entry has its options enclosed in curly braces (

{}). setis added before therootkeyword, and an=is needed to assign the root value to the partition specified.rootnoverifyhas been eliminated,rootis used instead.- Partition numbers start from

1rather than0. However, a similar change is not implemented for disk numbers.

Note: GRUB 2 also supports a more complex partition identification scheme to specify the partition table type (ex. (hd0,gpt2) for the second GPT partition, or (hd1,mbr3) for the third MBR partition).

GRUB 2 makes use of a set of scripts and other tools to help automatically maintain the /boot/grub/grub.cfg file.

Rather than edit the grub.cfg file manually, files in /etc/grub.d/ and the /etc/default/grub file should be edited. After making changes, the grub.cfg file should be recreated explicitly with one of the following (depending on OS):

update-grub > /boot/grub/grub.cfg

grub-mkconfig > /boot/grub/grub.cfg

grub2-mkconfig > /boot/grub/grub.cfg

Note: The update-grub, grub-mkconfig, and grub2-mkconfig scripts all output directly to STDOUT, which is why their output must be redirected to the /boot/grub/grub.cfg file manually.

Files in /etc/grub.d/ control particular GRUB OS probers. These scripts scan the system for particular operating systems and kernel, and add GRUB entries to /boot/grub/grub.cfg to support them.

Custom kernel entries can be added to the 40_custom file — enabling support for locally compiled kernels or unusual operating systems that GRUB doesn’t automatically detect.

The /etc/default/grub file controls the defaults created by the GRUB 2 configuration scripts.

To adjust the timeout:

GRUB_TIMEOUT=30

Note: A distribution designed to use GRUB 2, such as Ubuntu, will automatically run the configuration scripts after certain actions (ex. installation of a new kernel via the distribution’s package manager).

GRUB 2 is designed to work with both BIOS and EFI based machines.

Similar to GRUB Legacy, grub-install is run after Linux is installed to set up GRUB correctly.

Note: On EFI-based machines, the GRUB 2 EFI binary file should be placed appropriately automatically. However, if there are problems, efibootmgr can be used to fix them.

Alternative Boot Loaders

Although GRUB Legacy and GRUB 2 are the most dominant boot loaders for Linux (and the only ones covered on the exam), several other boot loaders are available:

Syslinux

The Syslinux Project (http://www.syslinux.org/) is a family of BIOS-based boot loaders, each of which is much smaller and more specialized than GRUB Legacy and GRUB 2.

The most notable member of this family is ISOLINUX, which is a boot loader for use on optical discs (which have unique boot requirements).

The EXTLINUX boot loader is another member of this family. It can boot Linux from an ex2, ext3, or ext4 filesystem.

LILO

The Linux Loader was the most common Linux boot loader in the 90s.

It works only on BIOS-based machines, and is quite limited and primitive by today’s standards.

If a Linux system uses LILO it will have a /etc/lilo.conf configuration file present on the system.

The Linux Kernel

Since version 3.3.0, the Linux kernel itself has incorporated an EFI boot loader for x86 and x86-64 systems.

On an EFI-based machine, this feature enables the kernel to serve as its own boot loader, eliminating the need for a separate tool such as GRUB 2 or ELILO.

rEFIt

Technically a boot manager, and not a boot loader.

It presents an attractive graphical interface, which allows users to select operating systems using icons rather than text.

It’s popular on Intel-based Macs, but some builds can be used on UEFI-based PCs as well.

This program can be found at http://refit.sourceforge.net/, but has been abandoned since development stopped in 2010.

rEFInd

A fork of rEFIt, designed to be more useful on UEFI-based PCs with extended features.

It also provides features that are designed to work with the Linux kernel’s built-in EFI boot loader, to make it easier to pass options required to get the kernel to boot.

The homepage for the project is http://www.rodsbooks.com/refind/.

gummiboot

An open-source EFI boot manager that’s similar to rEFIt and rEFInd, but uses a text-mode interface with fewer options.

The project page is http://freedesktop.org/wiki/Software/gummiboot.

Secure Boot

Microsoft requires the use of a firmware feature called Secure Boot, which has an impact on Linux boot loaders.

With Secure Boot enabled, an EFI-based machine will launch a boot loader only if it has been cryptographically signed by a key whose counterpart is stored in the computer’s firmware.

The goal of Secure Boot is to make it harder for malware authors to take over a computer by placing malware programs early in the boot process.

The problem for Linux is use of Secure Boot requires one of the following:

- The signing of a Linux boot loader with Microsoft’s key (since it’s the only one guaranteed to be on most machines).

- The addition of a distribution-specific or locally generated key to the machine’s firmware.

- The disabling of Secure Boot.

Currently, both Fedora and Ubuntu can use Secure Boot.

Note: It may be necessary to generate a key, or disable Secure Boot, to boot an arbitrary Linux distribution or a custom-built kernel.

The Boot Process

Extracting Information About the Boot Process

The kernel ring buffer stores some Linux kernel and module log information in memory.

Linux displays messages destined for the kernel ring buffer during the boot sequence (those messages that scroll by way too fast to be read).

To inspect the information in the kernel ring buffer:

# dmesg

Note: Many Linux distributions store the kernel ring buffer in /var/log/dmesg after the system boots.

Another important source for logging information is the system logger (syslogd), which stores log files in /var/log.

Some of the most important syslogd files are:

/var/log/messages/var/log/syslog

Note: Some Linux distributions also log boot-time information to other files. Debian uses a daemon called bootlogd that logs any messages that go to /dev/console to the /var/log/boot file. Fedora and Red Hat use syslogd services to log information to /var/log/boot.log.

The Boot Process

The boot process of an x86 machine from its initial state to a working operating system is:

- The system is powered on, and a special hardware circuit causes the CPU to look at a predefined address and execute the code stored in that location — which is the firmware (BIOS or EFI).

- The firmware checks hardware, configures it, and looks for a boot loader.

- When the boot loader takes over, it loads a kernel or chainloads another boot loader.

- Once the Linux kernel takes over, it initializes devices, mounts the root partition, and executes the initial program for the system — giving it a process ID (PID) of

1. By default, the initial program is/sbin/init.

Loading Kernels and initramfs

When the kernel is being loaded it needs to load drivers to handle the hardware, but those drivers may not yet be accessible if the hard drive isn’t mounted yet.

To avoid this issue, most Linux distributions utilize an initramfs file — which contains the necessary modules to access the hardware.

The boot loader mounts the initramfs file into memory as a virtual root filesystem for the kernel to use during boot.

Once the kernel loads the necessary drivers, it unmounts the initramfs filesystem and mounts the real root filesystem from the hard drive.

The Initialization Process

The first program that is started on a Linux machine (init) is responsible for starting the initialization process.

The initialization process is ultimately responsible for starting all programs and services that a Linux system needs to provide for the system.

There are three popular initialization process methods used in Linux:

- Unix System V (SysV)

- Upstart

- systemd

The original Linux init program was based on the Unix System V init program, and became commonly referred to as SysV.

The SysV init program uses a series of shell scripts, divided into separate runlevels, to determine what programs should run at what times.

Each program uses a separate shell script to start and stop the program.

The system administrator sets the runlevel at which the Linux system starts, which in turn determines which set of programs to run.

The system administrator can also change the runlevel at any time while the system is running.

The Upstart version of the init program was developed as part of the Ubuntu distribution.

Upstart uses separate configuration files for each service, and each service configuration file sets the runlevel in which the service should start.

This method makes it so that there is just one service file that’s used for multiple runlevels.

The systemd program was developed by Red Hat, and also uses separate configuration files.

Using the SysV Initialization Process

The key to SysV’s initialization process is runlevels.

The init program determines which service to start based on the current runlevel of the system.

Runlevel Functions

Runlevels are numbered from 0 to 6, and each one is assigned a set of services that should be active for that runlevel.

Note: While most systems only allow runlevels 0 to 6, some systems may have more. The /etc/inittab file will define all runlevels on a system.

Runlevels 0, 1, and 6 are reserved for special purposes; and the remaining runlevels can be used for whatever purposes the Linux distribution decides:

| Runlevel | Description |

0 |

Shuts down the system. |

1 (s, S, single) |

Single-user mode. |

2 |

Multi-user mode on Debian (and derivatives). Graphical login screen with X running. Most other distributions do not define anything for this runlevel. |

3 |

Multi-user mode on Red Hat, Fedora, Mandriva, etc. Non-graphical (console) login screen. |

4 |

Undefined typically, and available for customization. |

5 |

Multi-user mode on Red Hat, Fedora, Mandriva, etc. Graphical login screen with X running. |

6 |

Reboots the system. |

Identifying the Services in a Runlevel

One way to affect what programs run when entering a new SysV runlevel is to add or delete entries in the /etc/inittab file.

Basics of the /etc/inittab File

The entries within the /etc/inittab file follow a simple format.

Each line consists of four colon-delimited fields:

<id>:<runlevels>:<action>:<process>

| Field | Description |

| Identification Code | The <id> field consists of a sequence of one to four characters that identifies its function. |

| Applicable Runlevels | The <runlevels> field consists of a list of runlevels that applies for this entry (ex. 345 would apply to runlevels 3, 4, and 5). |

| Action to Take |

Specific codes in the See Example codes:

|

| Process to Run |

The Note: This field is omitted when using the |

The part of /etc/inittab that tells init how to handle each runlevel looks like:

l0:0:wait:/etc/init.d/rc 0

l1:0:wait:/etc/init.d/rc 1

l2:0:wait:/etc/init.d/rc 2

l3:0:wait:/etc/init.d/rc 3

l4:0:wait:/etc/init.d/rc 4

l5:0:wait:/etc/init.d/rc 5

l6:0:wait:/etc/init.d/rc 6

Each line begins with the letter l, followed by the runlevel number.

These lines specify scripts or programs that are to be run when the specific runlevel is entered.

In the above example, all scripts are the same (/etc/init.d/rc), but some distributions call specific programs for certain runlevels, such as shutdown for runlevel 0.

The SysV Startup Scripts

The /etc/init.d/rc or /etc/rc.d/rc script performs the crucial task of running all the scripts associated with the runlevel.

The runlevel-specific scripts are stored in one of the following locations:

/etc/init.d/rc?.d//etc/rc.d/rc?.d//etc/rc?.d/

The ? represents the runlevel number.

When entering a runlevel, rc passes the start parameter to all of the scripts with names that begin with a capital S, and it passes the stop parameter to all of the scripts with names that begin with a capital K.

These scripts are also numbered (ex. S10network, K35smb), and rc executes the scripts in numeric order — allowing distributions to control the order in which scripts run.

The files in the SysV runlevel directories are actually symbolic links to the main scripts, which are typically stored in one of the following locations:

/etc/init.d//etc/rc.d//etc/rc.d/init.d/

These original SysV startup scripts do not have the leading S or K and number (ex. smb instead of K35smb).

Note: Services can also be started and stopped by hand. For example, /etc/init.d/smb start will start the Samba server, and /etc/init.d/smb stop will stop the Samba server. Other options such as restart and status can be used as well.

Managing Runlevel Services

The SysV startup scripts in the runlevel directories are symbolic links back to the original script.

This prevents the need to copy the same script into each runlevel directory, and allows the user to modify the original script in just one location.

By editing the link filenames, a user can modify which programs are active in a runlevel.

Various utilities are available to help manage these links:

chkconfigupdate-rc.drc-update

chkconfig

To list the services and their applicable runlevels:

# chkconfig --list

The output will show each service’s runlevels with either an on or off state for each runlevel.

To check a specific service:

# chkconfig --list <service-name>

To modify the runlevels of a service:

# chkconfig --level <numbers> <service-name> <state>

<numbers> is the runlevels desired.

<state> is either on, off, or reset — which sets the value to its default value.

If a new startup script has been added to the main SysV startup script directory, chkconfig can be used to inspect the startup script for special comments that indicate default runlevels, and then add appropriate start and stop links in the runlevel directories:

# chkconfig --add <service-name>

Checking and Changing the Default Runlevel

On a SysV-based system, the default runlevel can be found by inspecting the /etc/inittab file and looking for initdefault.

An easy way to do this is with:

# grep :initdefault: /etc/inittab

id:3:initdefault:

To change the default runlevel for the next boot, edit the initdefault line in /etc/inittab.

Note: If a system lacks an /etc/inittab file, one can be created manually that only has an initdefault line to specify the desired default runlevel.

Determining the Current Runlevel

On a running system, the current runlevel can be found with:

# runlevel

The output will display either a number representing the system’s previous runlevel (ex. 5), or the letter N if no change has been made since boot time, followed by a number displaying the current runlevel.

An alternative option to finding the runlevel is:

# who -r

Changing Runlevels on a Running System

Changing runlevels on a running system can be done with the init, telinit, shutdown, halt, reboot, and poweroff commands.

Changing Runlevels with init or telinit

A user can have the system reread the /etc/inittab file to implement any changes made, or to change to a new runlevel.

To change to a specific runlevel:

# init <runlevel>

For example, rebooting can be done with init 6, and changing to single-user mode can be done with init 1.

A variant of init is telinit.

telinit works similarly to the way init does, but it also takes a Q / q option to reread the /etc/inittab file and implement any changes it finds:

# telinit q

Note: telinit is sometimes just a symbolic link to init, and in practice, init responds just like telinit to the Q / q options.

Changing Runlevels with shutdown

Rebooting or shutting down a machine with init can have problems:

- The command is unintuitive for these actions.

- The action is immediate and provides no warning to other users.

The shutdown command is preferred in this case:

# shutdown [<options>] <time> [<message>]

The most common options are:

| Option | Description |

-r |

Reboot |

-H |

Halt (terminate operation but do not power off). |

-P |

Power off |

-c |

Cancel pending shutdown |

The <time> parameter can set with:

nowhh:mm(a time on a 24-hour clock)+<minutes>

The optional <message> is placed within double quotes, and will display a message to all logged in users.

Note: The messages are sent using the wall command behind the scenes. This command can be used manually by either piping output into it (ex. echo "this is a message" | wall), or by entering the wall command, followed by the text to display, and finally a ^d character.

Once the desired time is reached, shutdown will run init to set the appropriate runlevel.

Note: If shutdown is run without any options it will change runlevel to 1 (single-user mode).

Changing Runlevels with halt, reboot, and poweroff

halt will terminate operation without powering down.

reboot will restart the system.

poweroff will terminate operation and power down.

Note: In most cases, reboot and poweroff are symbolic links to halt.

Using the systemd Initialization Process

The systemd initialization process is becoming the preferred method in the Linux world; and is currently the default option for Red Hat, Fedora, CentOS, etc.

Instead of using many small initialization shell scripts, systemd uses one big program that uses individual configuration files for each service.

Units and Targets

Instead of using shell scripts and runlevels, the systemd method uses units and targets.

A systemd unit defines a service or action on the system

Each unit consists of a name, a type, and a configuration file.

There are eight different types of systemd units:

automountdevicemountpathservicesnapshotsockettarget

The systemd program identifies units by their name and type using the format: name.type.

The systemctl command can be used to list the units currently loaded in a Linux system:

# systemctl list-units

The systemd method uses service-type units to manage the daemons on the Linux system.

The target-type units are important in grouping multiple units together, so that they can be started at the same time (ex. network.target groups all units required to start the network interfaces for a system).

The systemd initialization process uses targets similarly to the way SysV uses runlevels.

Each target represents a different group of services that should be running on the system.

Instead of changing runlevels to alter what is running on a system, a user can change targets.

Note: To make the transition from SysV to systemd easier, there are targets that mimic the standard 0 to 6 SysV runlevels, called runlevell0.target to runlevell6.target.

Configuring Units

Each unit requires a configuration file that defines what program it starts and how it should start the program.

The systemd system stores unit configuration files in /lib/systemd/system/.

This is an example configuration file for a sshd.service file on CentOS 7:

[Unit]

Description=OpenSSH server daemon

Documentation=man:sshd(8) man:sshd_config(5)

After=network.target sshd-keygen.service

Wants=sshd-keygen.service

[Service]

Type=forking

PIDFile=/var/run/sshd.pid

EnvironmentFile=/etc/sysconfig/sshd

ExecStart=/usr/sbin/sshd $OPTIONS

ExecReload=/bin/kill -HUP $MAINPID

KillMode=process

Restart=on-failure

RestartSec=42s

[Install]

WantedBy=multi-user.target

Here is a brief breakdown of some of the lines in the above example file:

ExecStart defines which program to start.

After specifies what services should run before the sshd service starts.

WantedBy defines what target level the system should be in to run the service.

Restart determines what conditions need to be present to trigger reloading the program.

Target units also use configuration files — defining which service units to start.

This is an example of the graphical.target configuration file on CentOS 7:

[Unit]

Description=Graphical Interface

Documentation=man:systemd.special(7)

Requires=multi-user.target

Wants=display-manager.service

Conflicts=rescue.service rescue.target

After=multi-user.target rescue.service rescue.target display-manager.service

AllowIsolate=yes

To breakdown the above example file:

After determines which targets should be loaded first.

Requires defines what targets are required for this target to start.

Conflicts states which targets conflict with this target.

Wants sets the required targets or services this target needs in order to run.

Setting the Default Target

The default target used when the system boots is defined in the default.target file of the /etc/systemd/system/ directory.

The systemd program looks for this file whenever it starts up.

Normally this file is a link to a standard target file in the /lib/systemd/system/ directory. For example:

[root@mg-ray-centos7 system]# ls -al default.target

lrwxrwxrwx. 1 root root 16 Dec 5 2016 default.target -> graphical.target

The systemctl Program

The systemctl program is used to control services and targets within the systemd method.

systemctl accepts several commands to define what action it will take:

| Command | Description |

list-units |

Displays the current status of all configured units. |

default |

Changes the default target unit. |

isolate |

Starts the named unit and stops all others. |

start <name> |

Starts the named unit. |

stop <name> |

Stops the named unit. |

reload <name> |

The named unit reloads its configuration file. |

restart <name> |

Stops and starts the named unit. |

status <name/PID> |

Displays the status of the named unit. |

enable <name> |

Configures the unit to start on boot. |

disable <name> |

Prevents the unit from starting on boot. |

To change the target that is currently running, use the isolate command:

# systemctl isolate rescue.target

# systemctl isolate graphical.target